QR can be used for solving linear systems and least-squares problems. QR factorization is very robust, and unlike LU factorization, it doesn’t rely on pivoting.

The cuSolverRF library can quickly update an existing LU factorization as the coefficients of the matrix change. This has application in chemical kinetics, combustion modeling and non-linear finite element methods.
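To make the least-squares use of QR concrete, here is a minimal CPU-side sketch in plain Python. It is not cuSOLVER code: the helper names `mgs_qr` and `least_squares` are hypothetical, and it uses modified Gram-Schmidt rather than the Householder reflections that LAPACK-style routines use, simply because it is shorter to read. It assumes a tall matrix with full column rank.

```python
import math


def mgs_qr(a):
    """Thin QR of an m x n matrix (m >= n, full column rank) by modified Gram-Schmidt.

    Returns (q_cols, r): q_cols holds the n orthonormal columns of Q,
    and r is the n x n upper-triangular factor, so that A = Q * R.
    """
    m, n = len(a), len(a[0])
    q_cols = [[a[i][j] for i in range(m)] for j in range(n)]
    r = [[0.0] * n for _ in range(n)]
    for j in range(n):
        r[j][j] = math.sqrt(sum(t * t for t in q_cols[j]))
        if r[j][j] == 0.0:
            raise ValueError("matrix is rank deficient")
        q_cols[j] = [t / r[j][j] for t in q_cols[j]]
        # Remove the component along q_j from every remaining column.
        for k in range(j + 1, n):
            r[j][k] = sum(q_cols[j][i] * q_cols[k][i] for i in range(m))
            q_cols[k] = [q_cols[k][i] - r[j][k] * q_cols[j][i] for i in range(m)]
    return q_cols, r


def least_squares(a, b):
    """Minimize ||A x - b||_2 by solving R x = Q^T b."""
    q_cols, r = mgs_qr(a)
    n = len(r)
    y = [sum(q[i] * b[i] for i in range(len(b))) for q in q_cols]  # y = Q^T b
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution on upper-triangular R
        tail = sum(r[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (y[i] - tail) / r[i][i]
    return x


# Fit a line y = c0 + c1 * t through three points that are not collinear.
ts = [0.0, 1.0, 2.0]
ys = [1.0, 2.0, 4.0]
design = [[1.0, t] for t in ts]
c0, c1 = least_squares(design, ys)
print("intercept:", c0, "slope:", c1)
```

The same two steps (form Q^T b, then back-substitute with R) also solve a square, non-singular system exactly, which is why one factorization serves both problem types.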

Let’s start with cuSolverDN, the dense factorization library. These routines are the most like LAPACK; in fact, cuSOLVER implements the LAPACK API with only minor changes. cuSOLVER includes Cholesky factorization (potrf), LU factorization (getrf), QR factorization (geqrf) and Bunch-Kaufman (sytrf), as well as GPU-accelerated solves that use the resulting factors (getrs, potrs). For solving systems with QR factorization, cuSOLVER provides ormqr to apply the orthogonal factor Q (stored by geqrf as Householder vectors) or its transpose to the right-hand side, after which the remaining triangular system in R can be solved with a triangular solve such as cuBLAS trsm. I’ll go into detail on these in a followup post.

cuSolverSP provides sparse factorization and solve routines based on QR factorization.
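The dense QR solve described above has three stages: factor, apply Q transpose, then solve with R. The sketch below mirrors those stages on the CPU in plain Python. It does not call cuSOLVER; the function names `factor_householder`, `apply_q_transpose`, `solve_upper` and `qr_solve` are hypothetical and only play the roles that geqrf, ormqr and a triangular solve play in the library. It assumes a square, non-singular matrix.

```python
import math


def factor_householder(a):
    """Role of geqrf: reduce A to upper-triangular R with Householder reflectors.

    Returns (r, reflectors), where each reflector is (v, v_dot_v) and
    represents H = I - 2 v v^T / (v^T v) acting on rows k..m-1.
    """
    m, n = len(a), len(a[0])
    r = [row[:] for row in a]
    reflectors = []
    for k in range(n):
        x = [r[i][k] for i in range(k, m)]
        norm_x = math.sqrt(sum(t * t for t in x))
        v = x[:]
        v[0] += math.copysign(norm_x, x[0])  # sign choice avoids cancellation
        v_dot_v = sum(t * t for t in v)
        reflectors.append((v, v_dot_v))
        if v_dot_v == 0.0:
            continue  # column is already zero; nothing to reflect
        for j in range(k, n):
            dot = sum(v[i] * r[k + i][j] for i in range(m - k))
            f = 2.0 * dot / v_dot_v
            for i in range(m - k):
                r[k + i][j] -= f * v[i]
    return r, reflectors


def apply_q_transpose(reflectors, b):
    """Role of ormqr: compute Q^T b by applying the reflectors in order."""
    y = list(b)
    for k, (v, v_dot_v) in enumerate(reflectors):
        if v_dot_v == 0.0:
            continue
        dot = sum(v[i] * y[k + i] for i in range(len(v)))
        f = 2.0 * dot / v_dot_v
        for i in range(len(v)):
            y[k + i] -= f * v[i]
    return y


def solve_upper(r, y, n):
    """Role of the triangular solve: back substitution on R x = y."""
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(r[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (y[i] - tail) / r[i][i]
    return x


def qr_solve(a, b):
    """Solve A x = b for square, non-singular A via Householder QR."""
    r, reflectors = factor_householder(a)
    y = apply_q_transpose(reflectors, b)
    return solve_upper(r, y, len(a[0]))


print(qr_solve([[2.0, 1.0], [1.0, 3.0]], [3.0, 5.0]))
```

Note that Q is never formed explicitly: only the reflector vectors are kept and applied to the right-hand side.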

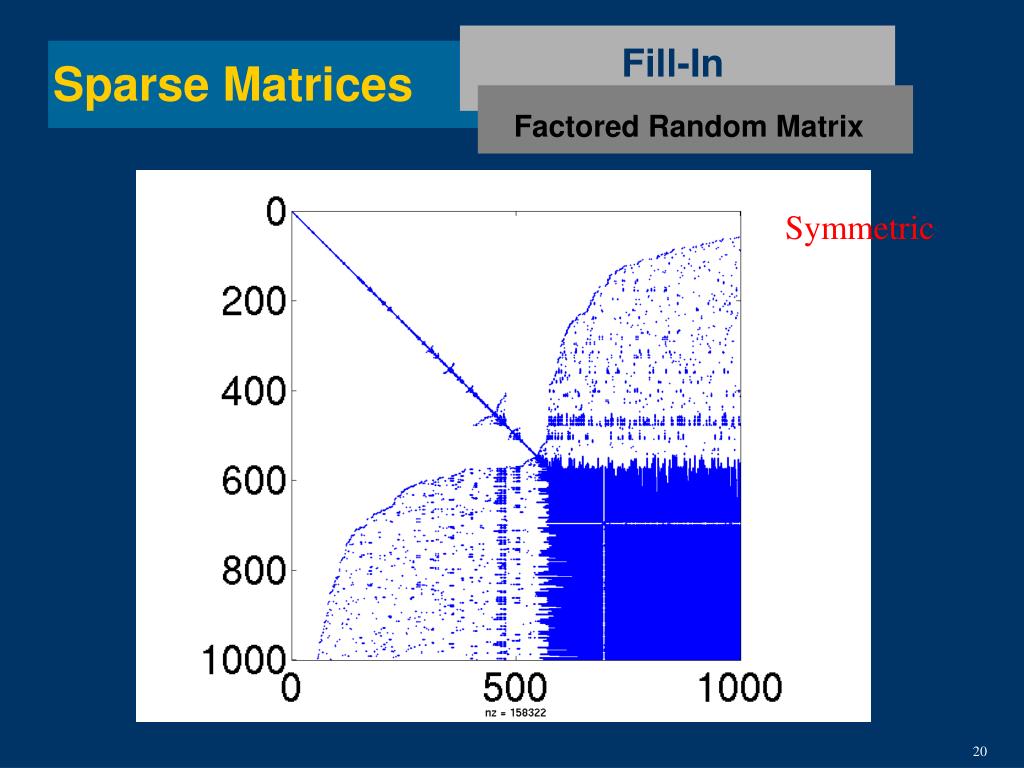

Figure 1 shows an example of LDL^T factorization of a dense matrix. A solver for this factorization would first solve with the L part, then apply the inverse of the D (diagonal) part in parallel, then solve again with the transpose of L to arrive at the final answer. The benefit of direct solvers is that, unlike iterative solvers, they always find a solution when the factors exist (more on this later), and once a factorization is found, solutions for many right-hand sides can be computed using the factors at a much lower cost per solution. Also, for small systems, direct solvers are typically faster than iterative methods because they only pass over the matrix once.

In this post I give an overview of cuSOLVER followed by an example of using batch QR factorization for solving many sparse systems in parallel. In a followup post I will cover other aspects of cuSOLVER, including dense system solvers and the cuSOLVER refactorization API.

The cuSOLVER library provides factorizations and solver routines for dense and sparse matrix formats, as well as a special re-factorization capability optimized for solving many sparse systems with the same, known sparsity pattern and fill-in, but changing coefficients. A goal for cuSOLVER is to provide some of the key features of LAPACK on the GPU, as users commonly request LAPACK capabilities in CUDA libraries. cuSOLVER has three major components: cuSolverDN, cuSolverSP and cuSolverRF, for Dense, Sparse and Refactorization, respectively.
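The factor-once, solve-many pattern for a symmetric matrix factored as A = L D L^T can be sketched in a few lines of plain Python. This is a CPU-only illustration under stated assumptions, not cuSOLVER code: the names `ldlt_factor` and `ldlt_solve` are hypothetical, and the factorization here does no pivoting, so it fails when a zero pivot appears (one case where "the factors do not exist" in this simple form).

```python
def ldlt_factor(a):
    """Factor a symmetric matrix as A = L * D * L^T without pivoting.

    Returns (l, d): l is unit lower triangular, d is the diagonal of D.
    """
    n = len(a)
    l = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    d = [0.0] * n
    for j in range(n):
        d[j] = a[j][j] - sum(l[j][k] ** 2 * d[k] for k in range(j))
        if d[j] == 0.0:
            raise ZeroDivisionError("zero pivot: unpivoted factors do not exist")
        for i in range(j + 1, n):
            s = sum(l[i][k] * l[j][k] * d[k] for k in range(j))
            l[i][j] = (a[i][j] - s) / d[j]
    return l, d


def ldlt_solve(l, d, b):
    """Solve A x = b given A = L D L^T, in three cheap steps."""
    n = len(b)
    # 1) Forward substitution: L z = b (L has a unit diagonal).
    z = [0.0] * n
    for i in range(n):
        z[i] = b[i] - sum(l[i][k] * z[k] for k in range(i))
    # 2) Diagonal scaling: y = D^{-1} z (each entry is independent).
    y = [z[i] / d[i] for i in range(n)]
    # 3) Back substitution: L^T x = y.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = y[i] - sum(l[k][i] * x[k] for k in range(i + 1, n))
    return x


a = [[4.0, 2.0], [2.0, 3.0]]
l, d = ldlt_factor(a)          # O(n^3) work, done once
for b in ([2.0, 1.0], [6.0, 5.0]):
    print(ldlt_solve(l, d, b))  # O(n^2) work per right-hand side
```

The loop at the end reuses the same factors for two right-hand sides, which is where the lower cost per solution comes from.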

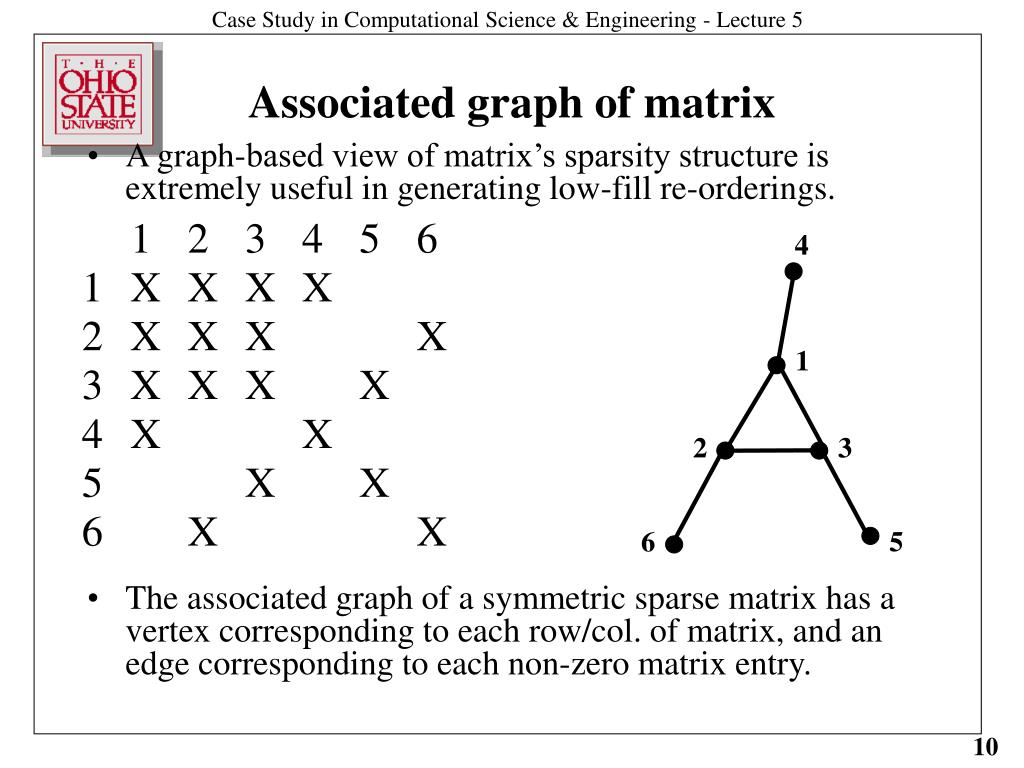

A key bottleneck for most science and engineering simulations is the solution of sparse linear systems of equations, which can account for up to 95% of total simulation time. There are two types of solvers for these systems: iterative and direct solvers. Iterative solvers are favored for the largest systems these days (see my earlier posts about AmgX), while direct solvers are useful for smaller systems because of their accuracy and robustness.

CUDA 7 expands the capabilities of GPU-accelerated numerical computing with cuSOLVER, a powerful new suite of direct linear system solvers. These solvers provide highly accurate and robust solutions for smaller systems, and cuSOLVER offers a way of combining many small systems into a ‘batch’ and solving all of them in parallel, which is critical for the most complex simulations today. Combustion models, bio-chemical models and advanced high-order finite-element models all benefit directly from this new capability. Computer vision and object detection applications need to solve many least-squares problems, so they will also benefit from cuSOLVER.

Direct solvers rely on algebraic factorization of a matrix, which breaks a hard-to-solve matrix into two or more easy-to-solve factors, and a solver routine which uses the factors and a right-hand side vector and solves them one at a time to give a highly accurate solution.
